Introduction:

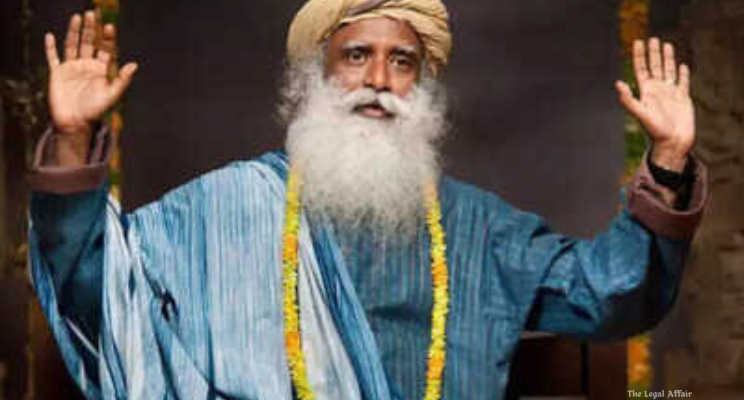

In a significant development for the protection of personality rights in the digital age, the Delhi High Court has directed Google LLC to make a sincere endeavour to use technological means to identify and remove deepfake or misleading content that infringes the personality rights of Sadhguru Jaggi Vasudev, the founder of the Isha Foundation. The order was passed by Justice Manmeet Pritam Singh Arora in the ongoing suit Sadhguru Jagadish Vasudev & Anr. v. Igor Isakov & Ors. It follows a John Doe and dynamic+ injunction order issued by Justice Saurabh Banerjee in May 2025, which had restrained rogue websites and unknown entities from misusing Sadhguru’s image, voice, and likeness through artificial intelligence or other digital tools. The High Court emphasized the need for collaboration between Google and Sadhguru’s representatives to identify misleading or defamatory content and to explore technological mechanisms that would automatically detect and remove identical or similar infringing material, thereby sparing the plaintiffs the burden of filing repeated manual complaints.

Arguments on Behalf of the Plaintiffs (Sadhguru Jaggi Vasudev & Anr.):

The plaintiffs, represented by Senior Counsel Mr. Sai Krishna Rajagopal along with advocates Ms. Disha Sharma, Ms. Deepika Pokharia, Mr. Angad Makkar, and Mr. Pushpit Ghosh, submitted that despite previous court orders protecting Sadhguru’s personality rights, misleading and manipulated content continued to circulate across platforms, especially through YouTube links and Google Ads. They contended that these materials not only distorted his teachings and statements but also constituted a gross violation of his moral, commercial, and personality rights. Counsel argued that such content, often created using artificial intelligence and deepfake technology, misleads the public and damages Sadhguru’s reputation by misrepresenting his views and activities.

The plaintiffs highlighted that a John Doe and dynamic+ injunction order had already been passed by Justice Saurabh Banerjee on May 30, 2025, recognizing Sadhguru’s unique identity traits, including his name, voice, image, appearance, and style of articulation, all of which form integral parts of his personality rights. That order had directed search engines, social media intermediaries, and rogue websites to ensure that infringing or impersonating content was taken down promptly. Despite this, the plaintiffs stated that they had to continually identify and report new URLs containing similar misleading representations, placing an unnecessary burden on them.

The plaintiffs invoked Rule 4(4) of the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, which requires significant social media intermediaries to endeavour to deploy technology-based measures, including automated tools, to proactively identify information identical to content that has previously been taken down pursuant to a court or government order. They argued that Google, being one of the largest intermediaries, had a duty to comply with these obligations and deploy suitable technology to automatically track and remove identical or near-identical infringing content. The plaintiffs also cited Google’s own Ads Misrepresentation Policy, which prohibits misleading advertisements or materials that misrepresent facts, personalities, or organizations.

The plaintiffs further pointed to a specific YouTube link that grossly misrepresented Sadhguru’s image and statements, arguing that such content fell squarely within the definition of misleading representation under Google’s policy framework. They contended that Google’s inaction or limited compliance failed to effectively curb the spread of this harmful material, forcing them to repeatedly approach the court for redressal. Thus, they requested that the Court direct Google to proactively employ artificial intelligence tools and content recognition technologies to automatically detect, filter, and remove such misleading or defamatory content, thereby upholding the spirit of the earlier John Doe order.

Arguments on Behalf of the Defendant (Google LLC):

Appearing for Google LLC, a team of advocates led by Ms. Mamta R. Jha along with Mr. Rohan Ahuja, Ms. Shruttima Ehersa, Mr. Rahul Choudhary, and Ms. Himani Sachdeva submitted that Google had already taken substantial steps in compliance with the previous court orders. Counsel informed the Court that all URLs specified in the earlier take-down order passed by Justice Saurabh Banerjee in May 2025 had been removed. Additional URLs subsequently identified by the plaintiffs had also been acted upon, and the complete details, including the Basic Subscriber Information (BSI), would be provided within two weeks.

Google’s counsel emphasized that the platform had been responsive to the plaintiffs’ complaints and was operating within the legal framework laid down by the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021. They stated that Google’s systems already functioned under a “notice and takedown” regime, where content is removed once it is specifically identified and verified as infringing. However, counsel clarified that Google could not automatically block or remove content without explicit identification because of legal and technical limitations, in particular the risk of over-blocking and of suppressing legitimate speech.

Further, Google’s representatives assured the Court that the company would adopt a collaborative approach with the plaintiffs to address their concerns. Counsel indicated that Google was open to a joint meeting with Sadhguru’s representatives to precisely identify the categories of misleading content that violated Google’s policies or court directions. Once these were identified, Google would make every possible effort to ensure that such content, including identical or similar videos or advertisements, was detected and removed through its technological infrastructure.

At the same time, the counsel for Google expressed certain reservations regarding the feasibility of deploying automated systems capable of identifying and removing all identical or similar content. They submitted that such systems have inherent limitations, particularly in distinguishing between legitimate commentary or fair use and defamatory or misleading material. Therefore, any directive requiring automatic detection must be balanced with technological practicality and the principles of free expression. Nonetheless, Google reiterated its commitment to cooperate fully with Sadhguru and the authorities to ensure that infringing content is promptly and effectively dealt with.

Court’s Analysis and Judgment:

Justice Manmeet Pritam Singh Arora, after hearing the parties, observed that the matter raised important questions about the enforcement of personality rights and the responsibility of intermediaries in the evolving digital landscape marked by artificial intelligence and deepfake technology. The Court noted that while Google had complied with previous directions and removed the URLs initially identified, the persistence of new misleading or defamatory content necessitated a more robust and proactive mechanism.

The Court emphasized that intermediaries such as Google, under Rule 4(4) of the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, are expected to employ appropriate technological tools to identify and restrict access to identical information that has already been adjudged unlawful or infringing. The object of this rule, the Court noted, is to relieve aggrieved persons of the repeated burden of monitoring and reporting identical infringing content. Justice Arora held that in the context of Sadhguru’s case, where deepfake technology is capable of rapidly reproducing and spreading manipulated versions of the same content, this obligation becomes even more critical.

Accordingly, the Court directed the plaintiffs and Google LLC to hold a joint meeting at which the plaintiffs could specifically identify the nature and category of content that falls within the exceptions and prohibitions of Google’s own Ads Misrepresentation Policy. This, the Court observed, would enable Google to calibrate its technology-based tools more effectively for proactive identification and removal of identical or similar content.

Justice Arora further ordered that Google must make an “endeavour” to ensure that such misleading and identical content is removed through the use of its technological infrastructure, thereby reducing the plaintiffs’ onus of continuously identifying new URLs and seeking takedown orders. The Court clarified that if Google faced any technological or legal limitations in implementing these directions, it could file an affidavit explaining the nature of such constraints and seek appropriate modification of the order.

The Court’s order reflects an evolving judicial recognition of the challenges posed by AI-generated content and the need for stronger intermediary accountability. It builds upon Justice Saurabh Banerjee’s earlier dynamic+ injunction protecting Sadhguru’s personality rights, which had held that the enforcement of such rights in the digital era must remain effective and visible across both physical and virtual platforms. Justice Banerjee’s earlier order had recognized that Sadhguru possessed distinctive traits—his voice, attire, articulation style, and overall persona—that constituted protectable elements of his identity. Consequently, any unauthorized use or imitation of these traits, especially through AI tools, amounted to infringement of personality rights.

Justice Arora’s order complements this earlier framework by placing the onus of technological responsibility upon intermediaries like Google. The Court’s directive to adopt a “collaborative and technology-driven” approach represents an important step toward balancing the enforcement of individual rights with the regulatory framework governing digital intermediaries. It also signals a judicial expectation that large technology companies must utilize their considerable technological resources to mitigate the harms of deepfakes and misinformation, especially when they infringe upon a person’s identity and reputation.

By providing Google the opportunity to file an affidavit detailing any technological limitations, the Court acknowledged the practical complexities of automated content moderation, thereby maintaining a fair and balanced approach. However, the emphasis remained on proactive compliance and mutual cooperation to protect Sadhguru’s rights without unduly restricting legitimate online expression.

This order serves as a progressive judicial response to the growing menace of deepfakes, impersonations, and digital defamation, particularly when such acts target public figures. It underscores the judiciary’s role in bridging the gap between emerging technology and legal protection of personality and intellectual property rights. Through its directions, the Delhi High Court reinforced that technological advancement must align with ethical responsibility and legal accountability, ensuring that the digital ecosystem remains a safe and truthful space.